Vimeo has officially joined the ranks of YouTube, TikTok, and Meta by introducing a system for labeling AI-generated content. This new feature, announced on Wednesday, requires creators to disclose when their content has been created or manipulated using artificial intelligence (AI). This initiative aims to maintain transparency and prevent viewers from mistaking AI-generated content for real events or authentic footage.

Enhancing Transparency with AI Content Labels

Vimeo's latest updates to its terms of service and community guidelines require creators to clearly label AI-generated, synthetic, or manipulated videos. The move is particularly significant as advances in generative AI make it increasingly difficult to tell real footage from AI-generated content.

Vimeo does not require labels on content that is obviously unrealistic, such as animated videos or videos with evident visual effects, or on videos where AI provided only minor production assistance. However, any content that depicts real people, places, or events and has been altered by AI must be disclosed. For example, videos showing celebrities saying or doing things they never actually said or did, or altered footage of real events, must carry an AI content label.

Implementation and Future Plans

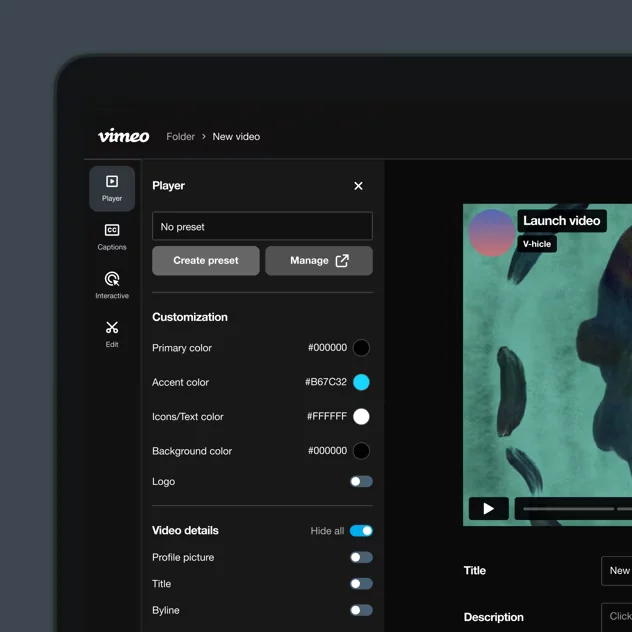

A distinctive label now appears at the bottom of such videos, indicating that the creator has voluntarily disclosed the use of AI. When uploading or editing a video, creators can tick a checkbox for AI-generated content and specify whether AI was used for the audio, the visuals, or both. For now, the label depends on creators' honesty, but Vimeo is working toward a system that detects and labels AI-generated content automatically.

Philip Moyer, Vimeo's CEO since April, said in an official blog post that the company's long-term goal is to develop reliable automated labeling systems. By detecting AI-generated content automatically, these systems are meant to improve transparency and reduce the burden on creators.

Protecting User-Generated Content

Moyer has previously laid out Vimeo's stance on protecting user-generated content from exploitation by AI companies. The platform prohibits generative AI models from being trained on videos hosted on Vimeo, in line with similar policies at other major platforms such as YouTube. Neal Mohan, YouTube's CEO, has also said that using YouTube videos to train AI models, including OpenAI's Sora, violates the platform's terms of service.

The Need for AI Content Labels

The introduction of AI content labels is a response to the growing challenge of distinguishing real content from AI-generated material. As AI tools become more sophisticated, the potential for creating realistic yet fake videos increases. This can lead to misinformation and confusion among viewers. By requiring AI content labels, Vimeo aims to foster a more transparent and trustworthy environment for its users.

The move also fits a broader industry trend. YouTube, TikTok, and Meta have introduced similar measures to make AI-generated content transparent to viewers, reflecting a shared recognition that maintaining user trust depends on curbing the spread of misleading content.

Conclusion

Vimeo's introduction of AI content labels marks a significant step towards greater transparency in the video creation industry. By requiring creators to disclose AI-generated content, Vimeo is helping to ensure that viewers are not misled by synthetic media. This initiative, coupled with future automated detection systems, reflects Vimeo's commitment to maintaining a trustworthy platform and protecting user-generated content.

As AI continues to evolve, the need for such measures will only grow. Vimeo's proactive approach strengthens an emerging standard for other platforms to follow, ultimately contributing to a more transparent and reliable digital media landscape.
